Tech News

The Humane AI Pin is the solution to none of technology's problems – Engadget

I’ve found myself at a loss for words when trying to explain the Humane AI Pin to my friends. The best description so far is that it’s a combination of a wearable Siri button with a camera and built-in projector that beams onto your palm. But each time I start explaining that, I get so caught up in pointing out its problems that I never really get to fully detail what the AI Pin can do. Or is meant to do, anyway.

Yet, words are crucial to the Humane AI experience. Your primary mode of interacting with the pin is through voice, accompanied by touch and gestures. Without speaking, your options are severely limited. The company describes the device as your “second brain,” but the combination of holding out my hand to see the projected screen, waving it around to navigate the interface and tapping my chest and waiting for an answer all just made me look really stupid. When I remember that I was actually eager to spend $700 of my own money to get a Humane AI Pin, not to mention shell out the required $24 a month for the AI and the company’s 4G service riding on T-Mobile’s network, I feel even sillier.

In the company’s own words, the Humane AI Pin is the “first wearable device and software platform built to harness the full power of artificial intelligence.” If that doesn’t clear it up, well, I can’t blame you.

There are basically two parts to the device: the Pin and its magnetic attachment. The Pin is the main piece, which houses a touch-sensitive panel on its face, with a projector, camera, mic and speakers lining its top edge. It’s about the same size as an Apple Watch Ultra 2, both measuring about 44mm (1.73 inches) across. The Humane wearable is slightly squatter, though, with its 47.5mm (1.87 inches) height compared to the Watch Ultra’s 49mm (1.93 inches). It’s also half the weight of Apple’s smartwatch, at 34.2 grams (1.2 ounces).

The top of the AI Pin is slightly thicker than the bottom, since it has to contain extra sensors and indicator lights, but it’s still about the same depth as the Watch Ultra 2. Snap on a magnetic attachment, and you add about 8mm (0.31 inches). There are a few accessories available, with the most useful being the included battery booster. You’ll get two battery boosters in the “complete system” when you buy the Humane AI Pin, as well as a charging cradle and case. The booster helps clip the AI Pin to your clothes while adding some extra hours of life to the device (in theory, anyway). It also brings an extra 20 grams (0.7 ounces) with it, but even including that the AI Pin is still 10 grams (0.35 ounces) lighter than the Watch Ultra 2.

That weight (or lack thereof) is important, since anything too heavy would drag down on your clothes, which would not only be uncomfortable but also block the Pin’s projector from functioning properly. If you're wearing it with a thinner fabric, by the way, you’ll have to swap the booster for the latch accessory, a $40 plastic tile that provides no additional power. You can also get the stainless steel clip that Humane sells for $50 to stick it onto heavier materials or belts and backpacks. Whichever accessory you choose, though, you’ll place it on the underside of your garment and stick the Pin on the outside to connect the pieces.

But you might not want to place the AI Pin on a bag, as you need to tap on it to ask a question or pull up the projected screen. Every interaction with the device begins with touching it (there is no wake word), so having it out of reach sucks.

Tap and hold on the touchpad, ask a question, then let go and wait a few seconds for the AI to answer. You can hold out your palm to read what it said, bringing your hand closer to and further from your chest to toggle through elements. To jump through individual cards and buttons, you’ll have to tilt your palm up or down, which can get in the way of seeing what’s on display. But more on that in a bit.

There are some built-in gestures offering shortcuts to functions like taking a picture or video or controlling music playback. Double-tapping the Pin with two fingers will snap a shot, while double-tapping and holding at the end will trigger a 15-second video. Swiping up or down adjusts the device or Bluetooth headphone volume while the assistant is talking or when music is playing, too.

Each person who orders the Humane AI Pin will have to set up an account and go through onboarding on the website before the company will ship out their unit. Part of this process includes signing into your Google or Apple accounts to port over contacts, as well as watching a video that walks you through those gestures I described. Your Pin will arrive already linked to your account with its eSIM and phone number sorted. This likely simplifies things so users won’t have to fiddle with tedious steps like installing a SIM card or signing into their profiles. It felt a bit strange, but it’s a good thing because, as I’ll explain in a bit, trying to enter a password on the AI Pin is a real pain.

The easiest way to interact with the AI Pin is by talking to it. It’s supposed to feel natural, like you’re talking to a friend or assistant, and you shouldn’t have to feel forced when asking it for help. Unfortunately, that just wasn’t the case in my testing.

When the AI Pin did understand me and answer correctly, it usually took a few seconds to reply, in which time I could have already gotten the same results on my phone. For a few things, like adding items to my shopping list or converting Canadian dollars to USD, it performed adequately. But “adequate” seems to be the best case scenario.

Sometimes the answers were too long or irrelevant. When I asked “Should I watch Dream Scenario,” it said “Dream Scenario is a 2023 comedy/fantasy film featuring Nicolas Cage, with positive ratings on IMDb, Rotten Tomatoes and Metacritic. It’s available for streaming on platforms like YouTube, Hulu and Amazon Prime Video. If you enjoy comedy and fantasy genres, it may be worth watching.”

Setting aside the fact that the “answer” to my query came after a lot of preamble I found unnecessary, I also just didn’t find the recommendation satisfying. It wasn’t giving me a straight answer, which is understandable, but ultimately none of what it said felt different from scanning the top results of a Google search. I would have gleaned more info had I looked the film up on my phone, since I’d be able to see the actual Rotten Tomatoes and Metacritic scores.

To be fair, the AI Pin was smart enough to understand follow-ups like “How about The Witch” without needing me to repeat my original question. But it’s 2024; we’re way past assistants that need so much hand-holding.

We’re also past the days of needing to word our requests in specific ways for AI to understand us. Though Humane has said you can speak to the pin “naturally,” there are some instances when that just didn’t work. First, it occasionally misheard me, even in my quiet living room. When I asked “Would I like YouTuber Danny Gonzalez,” it thought I said “would I like YouTube do I need Gonzalez” and responded “It’s unclear if you would like Dulce Gonzalez as the content of their videos and channels is not specified.”

When I repeated myself by carefully saying “I meant Danny Gonzalez,” the AI Pin spouted back facts about the YouTuber’s life and work, but did not answer my original question.

That’s not as bad as the fact that when I tried to get the Pin to describe what was in front of me, it simply would not. Humane has a Vision feature in beta that’s meant to let the AI Pin use its camera to see and analyze things in view, but when I tried to get it to look at my messy kitchen island, nothing happened. I’d ask “What’s in front of me” or “What am I holding out in front of you” or “Describe what’s in front of me,” which is how I’d phrase this request naturally. I tried so many variations of this, including “What am I looking at” and “Is there an octopus in front of me,” to no avail. I even took a photo and asked “can you describe what’s in that picture.”

Every time, I was told “Your AI Pin is not sure what you’re referring to” or “This question is not related to AI Pin” or, in the case where I first took a picture, “Your AI Pin is unable to analyze images or describe them.” I was confused why this wasn’t working even after I double checked that I had opted in and enabled the feature, and finally realized after checking the reviewers' guide that I had to use prompts that started with the word “Look.”

Look, maybe everyone else would have instinctively used that phrasing. But if you’re like me and didn’t, you’ll probably give up and never use this feature again. Even after I learned how to properly phrase my Vision requests, they were still clunky as hell. It was never as easy as “Look for my socks” but required two-part sentences like “Look at my room and tell me if there are boots in it” or “Look at this thing and tell me how to use it.”

When I worded things just right, results were fairly impressive. It confirmed there was a “Lysol can on the top shelf of the shelving unit” and a “purple octopus on top of the brown cabinet.” I held out a cheek highlighter and asked what to do with it. The AI Pin accurately told me “The Carry On 2 cream by BYBI Beauty can be used to add a natural glow to skin,” among other things, although it never explicitly told me to apply it to my face. I asked it where an object I was holding came from, and it just said “The image is of a hand holding a bag of mini eggs. The bag is yellow with a purple label that says ‘mini eggs.’” Again, it didn't answer my actual question.

Humane’s AI, which is powered by a mix of OpenAI’s recent versions of GPT and other sources including its own models, just doesn’t feel fully baked. It’s like a robot pretending to be sentient — capable of indicating it sort of knows what I’m asking, but incapable of delivering a direct answer.

My issues with the AI Pin’s language model and features don’t end there. Sometimes it just refuses to do what I ask of it, like restart or shut down. Other times it does something entirely unexpected. When I said “Send a text message to Julian Chokkattu,” who’s a friend and fellow AI Pin reviewer over at Wired, I thought I’d be asked what I wanted to tell him. Instead, the device simply said OK and told me it sent the words “Hey Julian, just checking in. How's your day going?” to Chokkattu. I've never said anything like that to him in our years of friendship, but I guess technically the AI Pin did do what I asked.

If only voice interactions were the worst thing about the Humane AI Pin, but the list of problems only starts there. I was most intrigued by the company’s “pioneering Laser Ink display” that projects green rays onto your palm, as well as the gestures that enabled interaction with “onscreen” elements. But my initial wonder quickly gave way to frustration and a dull ache in my shoulder. It might be tiring to hold up your phone to scroll through Instagram, but at least you can set that down on a table and continue browsing. With the AI Pin, if your arm is not up, you’re not seeing anything.

Then there’s the fact that it’s a pretty small canvas. I would see about seven lines of text each time, with about one to three words on each row depending on the length. This meant I had to hold my hand up even longer so I could wait for notifications to finish scrolling through. I also have a smaller palm than some other reviewers I saw while testing the AI Pin. Julian over at Wired has a larger hand and I was downright jealous when I saw he was able to fit the entire projection onto his palm, whereas the contents of my display would spill over onto my fingers, making things hard to read.

It’s not just those of us afflicted with tiny palms that will find the AI Pin tricky to see. Step outside and you’ll have a hard time reading the faint projection. Even on a cloudy, rainy day in New York City, I could barely make out the words on my hands.

When you can read what’s on the screen, interacting with it might make you want to rip your eyes out. Like I said, you’ll have to move your palm closer to and further from your chest to select the right cards to enter your passcode. It’s a bit like dialing a rotary phone, with cards for individual digits from 0 to 9. Go further away to get to the higher numbers and the backspace button, and come back for the smaller ones.

This gesture is smart in theory but it’s very sensitive. There’s a very small range of usable space since there is only so far your hand can go, so the distance between each digit is fairly small. One wrong move and you’ll accidentally select something you didn’t want and have to go all the way out to delete it. To top it all off, moving my arm around while doing that causes the Pin to flop about, meaning the screen shakes on my palm, too. On average, unlocking my Pin, which involves entering a four-digit passcode, took me about five seconds.

On its own, this doesn’t sound so bad, but bear in mind that you’ll have to re-enter this each time you disconnect the Pin from the booster, latch or clip. It’s currently springtime in New York, which means I’m putting on and taking off my jacket over and over again. Every time I go inside or out, I move the Pin to a different layer and have to look like a confused long-sighted tourist reading my palm at various distances. It’s not fun.

Of course, you can turn off the setting that requires password entry each time you remove the Pin, but that’s simply not great for security.

Though Humane says “privacy and transparency are paramount with AI Pin,” by its very nature the device isn’t suitable for performing confidential tasks unless you’re alone. You don’t want to dictate a sensitive message to your accountant or partner in public, nor might you want to speak your Wi-Fi password out loud.

The latter is one of two input methods for setting up an internet connection, by the way. If you choose not to spell your Wi-Fi key out loud, then you can go to the Humane website to type in your network name (you have to spell it out yourself rather than pick from a list of available networks) and password to generate a QR code for the Pin to scan. Having to verbally relay alphanumeric characters to the Pin is not ideal, and though the QR code technically works, it just involves too much effort. It’s like giving someone a spork when they asked for a knife and fork: good enough to get by, but not a perfect replacement.

Since communicating through speech is the easiest means of using the Pin, you’ll need to be able to speak and hear. If you choose not to raise your hand to read the AI Pin’s responses, you’ll have to listen for them. The good news is, the onboard speaker is usually loud enough for most environments, and I only struggled to hear it on NYC streets with heavy traffic passing by. I never attempted to talk to it on the subway, however, nor did I obnoxiously play music from the device while I was outside.

In my office and gym, though, I did get the AI Pin to play some songs. The music sounded fine — I didn’t get thumping bass or particularly crisp vocals, but I could hear instruments and crooners easily. Compared to my iPhone 15 Pro Max, it’s a bit tinny, as expected, but not drastically worse.

The problem is there are, once again, some caveats. The most important of these is that at the moment, you can only use Tidal’s paid streaming service with the Pin. You’ll get 90 days free with your purchase, and then have to pay $11 a month (on top of the $24 you already give to Humane) to continue streaming tunes from your Pin. Humane hasn’t said yet if other music services will eventually be supported, either, so unless you’re already on Tidal, listening to music from the Pin might just not be worth the price. Annoyingly, Tidal also doesn’t have the extensive library that competing providers do, so I couldn’t even play songs like Beyonce’s latest album or Taylor Swift’s discography (although remixes of her songs were available).
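To put those recurring fees in perspective, here is a rough sketch of the cumulative cost using only the prices quoted in this review. The 12- and 24-month horizons, the function name and the treatment of Tidal's 90 free days as three months are assumptions made for illustration, and taxes or future price changes are ignored.

```python
# Prices quoted in the review. Everything else here is an illustrative assumption.
DEVICE_PRICE = 700      # one-time hardware cost, in dollars
HUMANE_MONTHLY = 24     # required Humane subscription, per month
TIDAL_MONTHLY = 11      # Tidal subscription once the free period ends
TIDAL_FREE_MONTHS = 3   # assumption: "90 days free" approximated as three months


def total_cost(months: int, with_tidal: bool = True) -> int:
    """Return the cumulative cost in dollars after `months` of ownership."""
    cost = DEVICE_PRICE + HUMANE_MONTHLY * months
    if with_tidal:
        # Tidal is only billed for the months after the free period.
        paid_tidal_months = max(0, months - TIDAL_FREE_MONTHS)
        cost += TIDAL_MONTHLY * paid_tidal_months
    return cost


if __name__ == "__main__":
    for months in (12, 24):
        print(months, total_cost(months, with_tidal=False), total_cost(months))
```

Under those assumptions, a year of ownership comes to $988 without music and $1,087 with Tidal.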

Though Humane has described its “personic speaker” as being able to create a “bubble of sound,” that “bubble” certainly has a permeable membrane. People around you will definitely hear what you’re playing, so unless you’re trying to start a dance party, it might be too disruptive to use the AI Pin for music without pairing Bluetooth headphones. You’ll also probably get better sound quality from Bose, Beats or AirPods anyway.

I’ll admit it — a large part of why I was excited for the AI Pin is its onboard camera. My love for taking photos is well-documented, and with the Pin, snapping a shot is supposed to be as easy as double-tapping its face with two fingers. I was even ready to put up with subpar pictures from its 13-megapixel sensor for the ability to quickly capture a scene without having to first whip out my phone.

Sadly, the Humane AI Pin was simply too slow and feverish to deliver on that premise. I frequently ran into times when, after taking a bunch of photos and holding my palm up to see how each snap turned out, the device would get uncomfortably warm. At least twice in my testing, the Pin just shouted “Your AI Pin is too warm and needs to cool down” before shutting down.

Even when it’s running normally, using the AI Pin’s camera is slow. I’d double tap it and then have to stand still for at least three seconds before it would take the shot. I appreciate that there’s audio and visual feedback through the flashing green lights and the sound of a shutter clicking when the camera is going, so both you and people around know you’re recording. But it’s also a reminder of how long I need to wait — the “shutter” sound will need to go off thrice before the image is saved.

I took photos and videos in various situations under different lighting conditions, from a birthday dinner in a dimly lit restaurant to a beautiful park on a cloudy day. I recorded some workout footage in my building’s gym with large windows, and in general anything taken with adequate light looked good enough to post. The videos might make viewers a little motion sick, since the camera was clipped to my sports bra and moved around with me, but that’s tolerable.

In dark environments, though, forget about it. Even my Nokia E7 from 2012 delivered clearer pictures, most likely because I could hold it steady while framing a shot. The photos of my friends at dinner were so grainy, one person even seemed translucent. To my knowledge, that buddy is not a ghost, either.

To its credit, Humane’s camera has a generous 120-degree field of view, meaning you’ll capture just about anything in front of you. When you’re not sure if you’ve gotten your subject in the picture, you can hold up your palm after taking the shot, and the projector will beam a monochromatic preview so you can verify. It’s not really for you to admire your skilled composition or level of detail, and more just to see that you did indeed manage to get the receipt in view before moving on.

When it comes time to retrieve those pictures off the AI Pin, you’ll just need to navigate to humane.center in any browser and sign in. There, you’ll find your photos and videos under “Captures,” your notes, recently played music and calls, as well as every interaction you’ve had with the assistant. That last one made recalling every weird exchange with the AI Pin for this review very easy.

You’ll have to make sure the AI Pin is connected to Wi-Fi and power, and be at least 50 percent charged before full-resolution photos and videos will upload to the dashboard. But before that, you can still scroll through previews in a gallery, even though you can’t download or share them.

The web portal is fairly rudimentary, with large square tiles serving as cards for sections like “Captures,” “Notes” and “My Data.” Going through them just shows you things you’ve saved or asked the Pin to remember, like a friend’s favorite color or their birthday. Importantly, there isn’t an area for you to view your text messages, so if you wanted to type out a reply from your laptop instead of dictating to the Pin, sorry, you can’t. The only way to view messages is by putting on the Pin, pulling up the screen and navigating the onboard menus to find them.

That brings me to what you see on the AI Pin’s visual interface. If you’ve raised your palm right after asking it something, you’ll see your answer in text form. But if you had brought up your hand after unlocking or tapping the device, you’ll see its barebones home screen. This contains three main elements — a clock widget in the middle, the word “Nearby” in a bubble at the top and notifications at the bottom. Tilting your palm scrolls through these, and you can pinch your index finger and thumb together to select things.

Push your hand further back and you’ll bring up a menu with five circles that will lead you to messages, phone, settings, camera and media player. You’ll need to tilt your palm to scroll through these, but because they’re laid out in a ring, it’s not as straightforward as simply aiming up or down. Trying to get the right target here was one of the greatest challenges I encountered while testing the AI Pin. I was rarely able to land on the right option on my first attempt. That, along with the fact that you have to put on the Pin (and unlock it), made it so difficult to see messages that I eventually just gave up looking at texts I received.

One reason I sometimes took off the AI Pin is that it would frequently get too warm and need to “cool down.” Once I removed it, I would not feel the urge to put it back on. I did wear it a lot in the first few days I had it, typically from 7:45AM when I headed out to the gym till evening, depending on what I was up to. Usually at about 3PM, after taking a lot of pictures and video, I would be told my AI Pin’s battery was running low, and I’d need to swap out the battery booster. This didn’t seem to work sometimes, with the Pin dying before it could get enough power through the accessory. At first it appeared the device simply wouldn’t detect the booster, but I later learned it’s just slow and can take up to five minutes to recognize a newly attached booster.

When I wore the AI Pin to my friend (and fellow reviewer) Michael Fisher’s birthday party just hours after unboxing it, I had it clipped to my tank top just hovering above my heart. Because it was so close to the edge of my shirt, I would accidentally brush past it a few times when reaching for a drink or resting my chin on my palm a la The Thinker. Normally, I wouldn’t have noticed the Pin, but as it was running so hot, I felt burned every time my skin came into contact with its chrome edges. The touchpad also grew warm with use, and the battery booster resting against my chest also got noticeably toasty (though it never actually left a mark).

Part of the reason the AI Pin ran so hot is likely that there’s not a lot of room for the heat generated by its octa-core Snapdragon processor to dissipate. I had also been using it near constantly to show my companions the pictures I had taken, and Humane has said its laser projector is “designed for brief interactions (up to six to nine minutes), not prolonged usage” and that it had “intentionally set conservative thermal limits for this first release that may cause it to need to cool down.” The company added that it not only plans to “improve uninterrupted run time in our next software release,” but also that it’s “working to improve overall thermal performance in the next software release.”

There are other things I need Humane to address via software updates ASAP. The fact that its AI sometimes decides not to do what I ask, like telling me “Your AI Pin is already running smoothly, no need to restart” when I asked it to restart, is not only surprising but limiting. There are no hardware buttons to turn the Pin on or off, and the only other way to trigger a restart is to pull up the dreaded screen, painstakingly go to the menu, hopefully land on settings and find the Power option. By which point, if the Pin hasn’t shut down, my arm will have.

A lot of my interactions with the AI Pin also felt like problems I encountered with earlier versions of Siri, Alexa and the Google Assistant. The overly wordy answers, for example, or the pronounced two or three-second delay before a response, are all reminiscent of the early 2010s. When I asked the AI Pin to “remember that I parked my car right here,” it just saved a note saying “Your car is parked right here,” with no GPS information and no way to navigate back. So I guess I parked my car on a sticky note.

To be clear, that’s not something that Humane ever said the AI Pin can do, but it feels like such an easy thing to offer, especially since the device does have onboard GPS. Google’s made entire lines of bags and Levi’s jackets that serve the very purpose of dropping pins to revisit places later. If your product is meant to be smart and revolutionary, it should at least be able to do what its competitors already can, not to mention offer features they don’t.

One thing that the AI Pin actually manages to do competently is act as an interpreter. After you ask it to “translate to [x language],” you’ll have to hold down two fingers while you talk, then let go, and it will read out what you said in the relevant tongue. I tried talking to myself in English and Mandarin, and was frankly impressed not only with the accuracy of the translation and general vocal expressiveness, but also with how fast responses came through. You don’t even need to specify the language the speaker is using. As long as you’ve set the target language, the person talking in Mandarin will be translated to English and the words said in English will be read out in Mandarin.

It’s worth considering the fact that using the AI Pin is a nightmare for anyone who gets self-conscious. I’m pretty thick-skinned, but even I tried to hide the fact that I had a strange gadget with a camera pinned to my person. Luckily, I didn’t get any obvious stares or confrontations, but I heard from my fellow reviewers that they did. And as much as I like the idea of a second brain I can wear and offload little notes and reminders to, nothing that the AI Pin does well is actually executed better than a smartphone.

Not only is the Humane AI Pin slow, finicky and barely even smart, using it made me look pretty dumb. In a few days of testing, I went from being excited to show it off to my friends to not having any reason to wear it.

Humane’s vision was ambitious, and the laser projector initially felt like a marvel. At first glance, it looked and felt like a refined product. But it just seems like at every turn, the company had to come up with solutions to problems it created. No screen or keyboard to enter your Wi-Fi password? No worries, use your phone or laptop to generate a QR code. Want to play music? Here you go, a 90-day subscription to Tidal, but you can only play music on that service.

The company promises to make software updates that could improve some issues, and the few tweaks my unit received during this review did make some things (like music playback) work better. The problem is that as it stands, the AI Pin doesn’t do enough to justify its $700 and $24-a-month price, and I simply cannot recommend anyone spend this much money for the one or two things it does adequately.

Maybe in time, the AI Pin will be worth revisiting, but it’s hard to imagine why anyone would need a screenless AI wearable when so many devices exist today that you can use to talk to an assistant. From speakers and phones to smartwatches and cars, the world is full of useful AI access points that allow you to ditch a screen. Humane says it’s committed to a “future where AI seamlessly integrates into every aspect of our lives and enhances our daily experiences.”

After testing the company’s AI Pin, that future feels pretty far away.

North Carolina Assistive Technology Program | NCDHHS – NCDHHS

Want to learn how technology can help you connect to people, activities and your community? Don’t miss our Accessibility for All live event series, every Thursday at 11:30 a.m.

NCATP offers AAC and AT assessment services for children and adults.

NCATP recommends low tech devices to meet the needs of our consumers.

NCATP has 9 centers that serve North Carolinians from the mountains to the coast.

NCATP provides demonstrations of assistive technology and short-term loans of equipment. Our staff are available to assist you.

Keep up with the events hosted by NCATP through our Events Calendar.

Don’t miss our Adaptive Recreation and Active Living Resource Fairs coming up soon!

Learn about local resources and get hands-on experience with accessible equipment at an Adaptive Recreation and Active Living Resource Fair near you. All fairs are free and open to the public. We encourage everyone interested in staying active and accessible recreation and leisure activities to attend. More information.

The North Carolina Assistive Technology Program (NCATP) is a state and federally funded program that provides assistive technology services statewide to people of all ages and abilities. NCATP leads North Carolina’s efforts to carry out the federal Assistive Technology Act of 2004 by providing device demonstration, short-term device loans, and reutilization of assistive technology. We promote independence for people with disabilities through access to technology.

Sign up for a BEAM Tour

NCATP Administrative Offices

2801 Mail Service Center

Raleigh, NC 27699-2801

Phone: 919-855-3500

Confidential Fax: 919-715-1776

NCATP is partially funded by Grant Number 1601NCSGAT from the Administration for Community Living. The contents of this website are solely the responsibility of the authors and do not necessarily represent the official views of the Administration for Community Living.

Administration of Community Living Award Information

NC Department of Health and Human Services

2001 Mail Service Center

Raleigh, NC 27699-2000

Customer Service Center: 1-800-662-7030

Visit RelayNC for information about TTY services.

Does technology help or hurt employment? | MIT News | Massachusetts Institute of Technology – MIT News

This is part 2 of a two-part MIT News feature examining new job creation in the U.S. since 1940, based on new research from Ford Professor of Economics David Autor. Part 1 is available here.

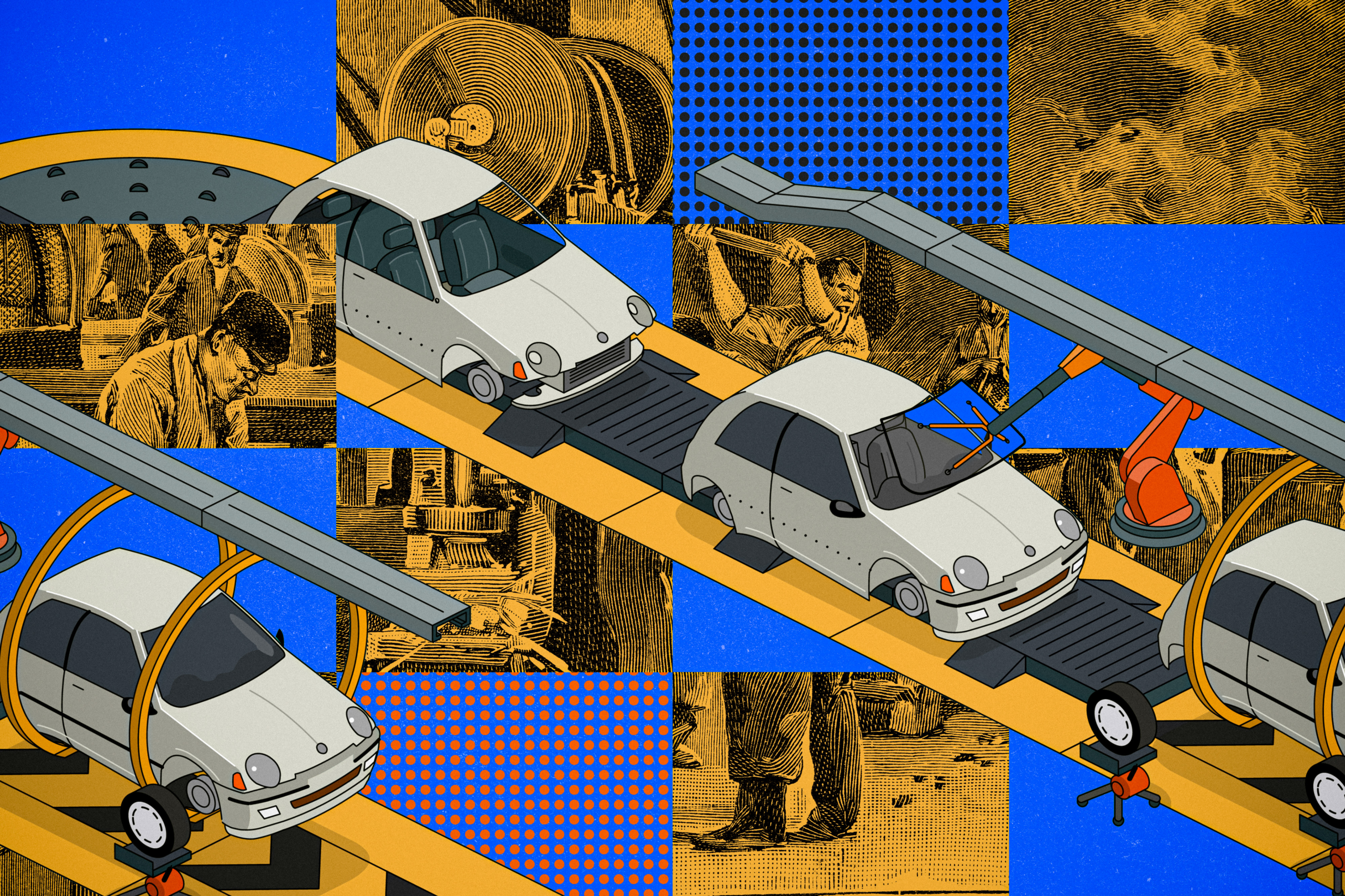

Ever since the Luddites were destroying machine looms, it has been obvious that new technologies can wipe out jobs. But technical innovations also create new jobs: Consider a computer programmer, or someone installing solar panels on a roof.

Overall, does technology replace more jobs than it creates? What is the net balance between these two things? Until now, that has not been measured. But a new research project led by MIT economist David Autor has developed an answer, at least for U.S. history since 1940.

The study uses new methods to examine how many jobs have been lost to machine automation, and how many have been generated through “augmentation,” in which technology creates new tasks. On net, the study finds, and particularly since 1980, technology has replaced more U.S. jobs than it has generated.

“There does appear to be a faster rate of automation, and a slower rate of augmentation, in the last four decades, from 1980 to the present, than in the four decades prior,” says Autor, co-author of a newly published paper detailing the results.

However, that finding is only one of the study’s advances. The researchers have also developed an entirely new method for studying the issue, based on an analysis of tens of thousands of U.S. census job categories in relation to a comprehensive look at the text of U.S. patents over the last century. That has allowed them, for the first time, to quantify the effects of technology on both job loss and job creation.

Previously, scholars had largely just been able to quantify job losses produced by new technologies, not job gains.

“I feel like a paleontologist who was looking for dinosaur bones that we thought must have existed, but had not been able to find until now,” Autor says. “I think this research breaks ground on things that we suspected were true, but we did not have direct proof of them before this study.”

The paper, “New Frontiers: The Origins and Content of New Work, 1940-2018,” appears in the Quarterly Journal of Economics. The co-authors are Autor, the Ford Professor of Economics; Caroline Chin, a PhD student in economics at MIT; Anna Salomons, a professor in the School of Economics at Utrecht University; and Bryan Seegmiller SM ’20, PhD ’22, an assistant professor at the Kellogg School of Northwestern University.

Automation versus augmentation

The study finds that overall, about 60 percent of jobs in the U.S. represent new types of work, which have been created since 1940. A century ago, that computer programmer may have been working on a farm.

To determine this, Autor and his colleagues combed through about 35,000 job categories listed in the U.S. Census Bureau reports, tracking how they emerge over time. They also used natural language processing tools to analyze the text of every U.S. patent filed since 1920. The research examined how words were “embedded” in the census and patent documents to unearth related passages of text. That allowed them to determine links between new technologies and their effects on employment.

“You can think of automation as a machine that takes a job’s inputs and does it for the worker,” Autor explains. “We think of augmentation as a technology that increases the variety of things that people can do, the quality of things people can do, or their productivity.”

From about 1940 through 1980, for instance, jobs like elevator operator and typesetter tended to get automated. But at the same time, more workers filled roles such as shipping and receiving clerks, buyers and department heads, and civil and aeronautical engineers, where technology created a need for more employees.

From 1980 through 2018, the ranks of cabinetmakers and machinists, among others, have been thinned by automation, while, for instance, industrial engineers, and operations and systems researchers and analysts, have enjoyed growth.

Ultimately, the research suggests that the negative effects of automation on employment were more than twice as great in the 1980-2018 period as in the 1940-1980 period. There was a more modest, and positive, change in the effect of augmentation on employment in 1980-2018, as compared to 1940-1980.

“There’s no law these things have to be one-for-one balanced, although there’s been no period where we haven’t also created new work,” Autor observes.

What will AI do?

The research also uncovers many nuances in this process, though, since automation and augmentation often occur within the same industries. It is not just that technology decimates the ranks of farmers while creating air traffic controllers. Within the same large manufacturing firm, for example, there may be fewer machinists but more systems analysts.

Relatedly, over the last 40 years, technological trends have exacerbated a gap in wages in the U.S., with highly educated professionals being more likely to work in new fields, which themselves are split between high-paying and lower-income jobs.

“The new work is bifurcated,” Autor says. “As old work has been erased in the middle, new work has grown on either side.”

As the research also shows, technology is not the only thing driving new work. Demographic shifts lie behind growth in numerous sectors of the service industries. Intriguingly, the new research suggests that large-scale consumer demand drives technological innovation as well. Inventions are not just supplied by bright people thinking outside the box; they also arise in response to clear societal needs.

The 80 years of data also suggest that future pathways for innovation, and the employment implications, are hard to forecast. Consider the possible uses of AI in workplaces.

“AI is really different,” Autor says. “It may substitute some high-skill expertise but may complement decision-making tasks. I think we’re in an era where we have this new tool and we don’t know what [it’s] good for. New technologies have strengths and weaknesses and it takes a while to figure them out. GPS was invented for military purposes, and it took decades for it to be in smartphones.”

He adds: “We’re hoping our research approach gives us the ability to say more about that going forward.”

As Autor recognizes, there is room for the research team’s methods to be further refined. For now, he believes the research opens up new ground for study.

“The missing link was documenting and quantifying how much technology augments people’s jobs,” Autor says. “All the prior measures just showed automation and its effects on displacing workers. We were amazed we could identify, classify, and quantify augmentation. So that itself, to me, is pretty foundational.”

Support for the research was provided, in part, by The Carnegie Corporation; Google; Instituut Gak; the MIT Work of the Future Task Force; Schmidt Futures; the Smith Richardson Foundation; and the Washington Center for Equitable Growth.

Fast Company reporter Shalene Gupta spotlights new research by Prof. David Autor that finds “about 60% of jobs in 2018 did not exist [in] 1940. Since 1940, the bulk of new jobs has shifted from middle-class production and clerical jobs to high-paid professional jobs and low-paid service jobs.” Additionally, the researchers uncovered evidence that “automation eroded twice as many jobs from 1980 to 2018 as it had from 1940 to 1980. While augmentation did add some jobs to the economy, it was not as many as the ones lost by automation.”




Reaction to Supreme Court decision in web design case – Spectrum News NY1


Democrats and LGBTQ+ advocates on Friday condemned a Supreme Court decision siding with a Colorado web designer who argued she should be able to refuse to build wedding websites for same-sex couples. Republicans, meanwhile, praised the ruling as a victory for religious freedom.

In a 6-3 decision, the court ruled that a Colorado law that forbids businesses open to the public from discriminating against gay people violated web designer Lorie Smith’s free-speech rights. Smith, who owns the graphic design firm 303 Creative, argued that working with same-sex couples would go against her Christian faith.

President Joe Biden called the ruling “disappointing” because, he said, it undermines the principle that “no person should face discrimination simply because of who they are or who they love.”

While the decision only applies to businesses that perform creative services, Biden said he fears it could “invite more discrimination against LGBTQI+ Americans.”

The president added that his administration remains committed to working with federal law enforcement to protect Americans from discrimination based on gender identity or sexual orientation and will work with states to combat attempts to roll back civil rights protections.

“When one group’s dignity and equality are threatened, the promise of our democracy is threatened and we all suffer,” Biden said.

Senate Majority Leader Chuck Schumer, D-N.Y., called the ruling “a giant step backward for human rights and equal protection in the United States.”

“Refusing service based on whom someone loves is just as bigoted and hateful as refusing service because of race or religion,” he said in a statement. “And this is bigotry that the vast majority of Americans find completely unacceptable.”

Kelley Robinson, president of the Human Rights Campaign, an LGBTQ+ advocacy group, called the decision “dangerous” because it will give “some businesses the power to discriminate against people simply because of who we are.”

“People deserve to have commercial spaces that are safe and welcoming,” she said. “This decision continues to affirm how radical and out-of-touch this Court is.”

Rep. Robert Garcia, D-Calif., who is openly gay, called it “a very dark day for our community.”

“This is a devastating ruling for the LGBTQ+ community,” he said in an interview with MSNBC. “We just spent a month celebrating, trying to uplift pride. It’s already a very dark time for this community, where you have attacks happening on our community every day in Congress, in state legislatures. And to have this happen by a true extreme activist court to roll back a key protection against discriminating our community is shameful.”

Rep. Bonnie Watson Coleman, D-N.J., also attacked the conservative-majority court while noting the case involved a hypothetical situation. Smith has never designed wedding websites. She argued she wants to expand her business but was concerned about running afoul of the Colorado law.

“This SCOTUS is out of control,” Watson Coleman tweeted. “It’s now taking made up cases in order to push a radical agenda that is out of touch with the vast majority of the American people.”

Rep. Ayanna Pressley, D-Mass., meanwhile, directed a supportive tweet toward the LGBTQ community.

“Despite yet another callous ruling from this extreme Supreme Court, I want our LGBTQ siblings to know: We see you. We love you. We won’t stop fighting for you,” she wrote.

But Kristen Waggoner, president and CEO of the Alliance Defending Freedom, a conservative Christian legal advocacy group, said in a statement the Supreme Court made the right decision. ADF attorneys, including Waggoner, represented Smith in the case.

“The U.S. Supreme Court rightly reaffirmed that the government can’t force Americans to say things they don’t believe,” Waggoner said. “The court reiterated that it’s unconstitutional for the state to eliminate from the public square ideas it dislikes, including the belief that marriage is the union of husband and wife.

“Disagreement isn’t discrimination, and the government can’t mislabel speech as discrimination to censor it,” she added.

Sen. Ted Cruz, R-Texas, said laws should not compel business owners to use their speech in ways that contradict their faith.

“This law wasn’t just a threat to Christians either,” Cruz said in a statement. “Should a Muslim artist be compelled by the government to draw the image of Muhammed? Should Jewish artists be forced to create art that is antisemitic?”

On Twitter, Sen. Lindsey Graham, R-S.C., added, “Participating in commerce does not mean you should have to abandon your faith and individuals should not be compelled, by government, to act counter to their faith.”

Former Vice President Mike Pence, who is running for the Republican presidential nomination in 2024, said in a tweet, “Today Faith Won!”

“Freedom of Religion is the bedrock of our Constitution,” he wrote. “Today’s decision by the Supreme Court is a victory for the Religious Liberty of every American of every faith to live, work and worship according to their faith and conscience!”

And former Ambassador to the United Nations Nikki Haley, a Republican who is also running for president, said she’s “glad we have a Supreme Court that respects our Constitution.”

“Unlike in other countries, we don’t force our citizens to express themselves in ways that conflict with their religious beliefs. It’s called the First Amendment,” Haley said in a statement.
